Everything NVIDIA announced at GTC 2026

GTC 2026 marks a structural shift in AI computing: the transition from a training-centered paradigm to one dominated by large-scale inference and agentic systems.

More than isolated announcements, the event presented a cohesive architecture that integrates hardware, software, models, and infrastructure as a single operating system of intelligence.

🚀 7 courses to accelerate your career in AI

Fundamentals of Machine Learning and Artificial Intelligence (AWS)

Generative AI with Large Language Models (DeepLearningAI + AWS)

Happy learning! ✨

From the training era to the inference era

The first major insight from the event is the consolidation of inference as the dominant workload.

When models begin to reason, plan, and execute tasks, inference stops being a final step and becomes the core of the system. Every interaction requires computation. Every decision generates new tokens. And this happens continuously.

In practice, this changes the nature of workloads:

they stop being predominantly batch-based

they become simultaneously:

latency-sensitive

throughput-intensive

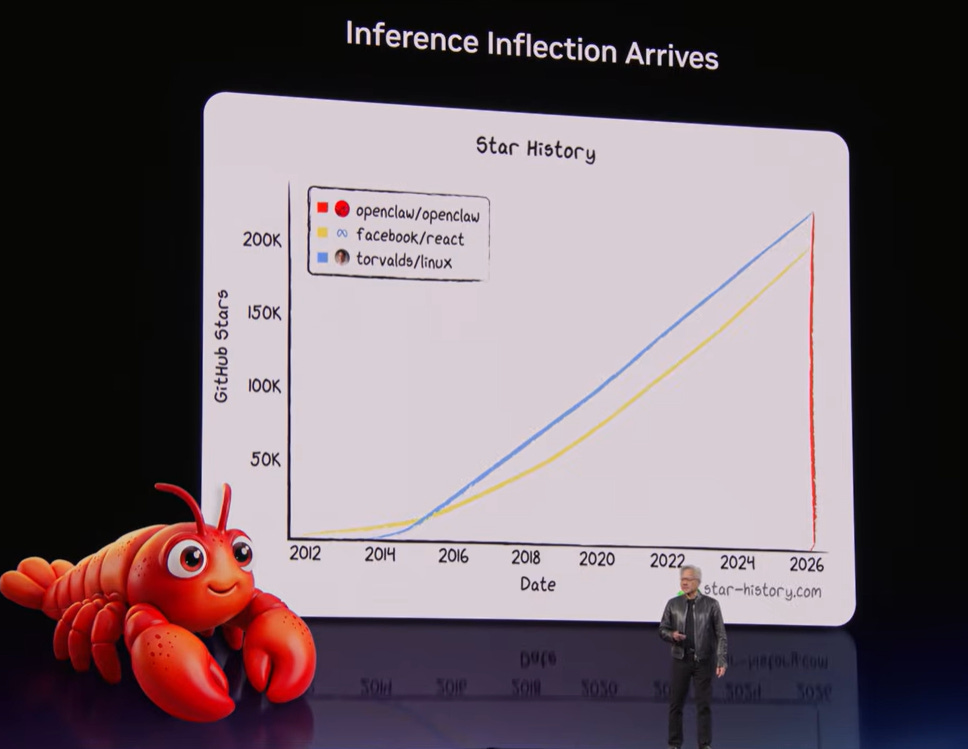

👉 This phenomenon, referred to by NVIDIA as the “inference inflection point”, indicates a structural shift in system design.

AI factories: the industrialization of intelligence

With inference at the center, data centers also change their role.

They stop being just infrastructure and begin to operate as AI factories. The logic is straightforward:

energy + hardware → tokens

Tokens stop being just model outputs and begin to represent the economic unit of AI.

This introduces a new critical metric: “tokens per watt”.

This metric synthesizes efficiency, cost, and revenue. In a scenario where energy capacity is limited, producing more tokens with the same energy becomes the main engineering objective.

👉 The result is a shift in mindset: systems are now designed as industrial pipelines for generating intelligence.

The Vera Rubin platform

Within this new context, the Vera Rubin platform should not be seen as a chip, but as a complete architecture.

It combines different types of computation into a single cohesive system:

Rubin GPUs → massive parallelism

Vera CPUs → orchestration and single-thread performance

Groq LPUs → efficient token generation

BlueField-4 → distributed memory and storage

NVLink + Spectrum-X → high-speed communication

The central point is not just power, but specialization. Each component was designed for a specific part of the problem.

This is especially relevant in agent-based systems, which require:

intensive use of KV cache

access to structured and unstructured data

continuous and adaptive execution

👉 The architecture, therefore, is not generic—it is optimized for this new type of workload.

Agent-based systems: a new abstraction

Another fundamental shift is the evolution of the software model itself.

We are moving from reactive systems to agent-based systems. This means AI doesn’t just respond—it executes. These systems are capable of:

decomposing problems

planning steps

using external tools

creating sub-agents

To support this, a new abstraction layer emerges.

OpenClaw is introduced as an operating system for AI agents. It organizes execution, manages resources, and connects models to tools and data.

But this capability brings risks. Agents can access sensitive information, execute code, and interact with external systems.

NemoClaw emerges as a response, adding:

isolation (sandboxing)

policy-based control

hybrid execution (local + cloud)

👉 In practice, we are witnessing the emergence of operating systems for AI.

Disaggregated inference and the role of Dynamo

One of the most important advances presented was the concept of disaggregated inference.

Traditionally, the same hardware runs the entire pipeline. The problem is that different stages have different characteristics. Some are memory-intensive, others latency-sensitive.

The proposed solution separates these responsibilities:

GPUs handle prefill and attention

LPUs handle token generation

This division allows each stage to be optimized with the most appropriate hardware.

Dynamo 1.0 is the software that enables this architecture. It acts as a distributed inference runtime, responsible for:

orchestrating execution across different processors

managing KV cache

optimizing scheduling

maximizing system utilization

The reported gains (up to 7× performance) show that efficiency comes not only from hardware, but from how it is coordinated.

Tokens as an economic unit

With inference at the center and agents operating continuously, tokens take on a new role: a unit of value.

This enables new pricing models based on:

latency

throughput

context size

The market tends to segment:

low-cost tokens → high throughput

premium tokens → high performance

This shift redefines the software model itself.

👉 SaaS evolves into AaaS (Agentic-as-a-Service).

Physical AI: when computation becomes data

In robotics, an additional challenge arises: the limitation of real-world data.

The physical world is complex, unpredictable, and difficult to fully capture. The adopted solution is the extensive use of simulation.

Virtual environments make it possible to generate synthetic data at scale, accelerating training and reducing risks.

This leads to an important principle:

👉 Computation starts generating data, not just processing it. This concept is central to the advancement of physical AI.

Autonomous vehicles as physical agents

Autonomous vehicles represent the convergence of these ideas.

They combine:

multimodal perception

real-time reasoning

continuous decision-making

👉 In practice, they are physical agents operating in complex environments.

The scale of this system, with millions of vehicles, requires exactly the type of infrastructure presented at GTC.

The real move: platform dominance

More than technology, GTC 2026 reveals a strategy.

NVIDIA is positioning its stack as the foundation of the entire AI economy, integrating:

cloud providers

AI companies

industry

mobility

This creates a powerful network effect. The more companies adopt the platform, the greater the dependency becomes.

👉 It is a classic platform dominance strategy, now applied to artificial intelligence.

The takeaway

GTC 2026 was not just about new products. It was about defining a new computational model.

Computing is shifting from a data processing system to a system for producing intelligence.

This implies:

new metrics (tokens per watt)

new architectures (heterogeneous and distributed)

new software models (agent-based)

new business models (AaaS)

In the end, the shift can be summarized as:

👉 We are no longer just using AI; we are operating factories of intelligence.

And whoever masters this infrastructure will play a central role in the future of technology.

Follow our page on LinkedIn for more content like this! 😉