Gpt-oss: The New Milestone from OpenAI in Open-Weight Models

OpenAI has just announced two new open-weight language models, gpt-oss-120b and gpt-oss-20b, which promise to redefine what is possible in terms of performance, accessibility, and safety in the open AI ecosystem.

This release marks the company’s return to open-weight language models, its first since GPT-2, now with state-of-the-art capabilities and a permissive Apache 2.0 license that allows commercial use.

What is Gpt-oss?

Gpt-oss is a family of open-weight language models designed for advanced reasoning, tool use, and efficient execution, with a context window of 128k tokens.

The two versions have distinct purposes:

gpt-oss-120b → Performance comparable to o4-mini on reasoning tasks (and better on several benchmarks), while running on a single 80 GB GPU.

gpt-oss-20b → Comparable to o3-mini, able to run locally on devices with only 16 GB of RAM, ideal for developers seeking speed and low cost.

Architecture and Technical Innovation

Both models are Transformers with a Mixture-of-Experts (MoE) architecture: only a fraction of the parameters is activated for each token, which reduces computational cost without sacrificing reasoning ability.

gpt-oss-120b: 117 billion total parameters, activating 5.1 billion per token.

gpt-oss-20b: 21 billion total parameters, activating 3.6 billion per token.
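
To make the MoE saving concrete, the short sketch below computes what share of each model's parameters is active per token, using only the figures quoted above. The dictionary layout and the function name are illustrative choices, not part of any OpenAI tooling.

```python
# Parameter counts as quoted in the text above (in billions).
MODELS = {
    "gpt-oss-120b": {"total_b": 117.0, "active_b": 5.1},
    "gpt-oss-20b": {"total_b": 21.0, "active_b": 3.6},
}


def active_fraction(total_b: float, active_b: float) -> float:
    """Return the share of parameters used to process a single token."""
    return active_b / total_b


for name, params in MODELS.items():
    frac = active_fraction(params["total_b"], params["active_b"])
    print(f"{name}: {frac:.1%} of parameters active per token")
```

With these numbers, roughly 4% of the larger model and 17% of the smaller one is active for any given token.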

Developer Features

Gpt-oss is designed for complex workflows and agentic tasks, including:

Function calling with few-shot examples

Tool usage such as online search or Python code execution

Three levels of reasoning effort (high, medium, low) to balance speed and depth

Full Chain-of-Thought (CoT) support and structured outputs
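
The sketch below illustrates two of the features listed above: choosing a reasoning effort and handling a function call. It makes several assumptions. The effort level is passed as a `Reasoning: <level>` line in the system message, following the convention described in the model card; the tool `run_python_sum`, the JSON-schema tool description, and the shape of the tool call (`name` plus JSON-encoded `arguments`) are hypothetical and may differ from what a given runtime expects.

```python
import json


def run_python_sum(numbers: list[float]) -> float:
    """Hypothetical local tool: add up a list of numbers."""
    return sum(numbers)


# Hypothetical tool description in the JSON-schema style used by many chat APIs.
TOOLS = [{
    "type": "function",
    "function": {
        "name": "run_python_sum",
        "description": "Add up a list of numbers.",
        "parameters": {
            "type": "object",
            "properties": {
                "numbers": {"type": "array", "items": {"type": "number"}},
            },
            "required": ["numbers"],
        },
    },
}]

# Maps tool names the model may request to local Python callables.
REGISTRY = {"run_python_sum": run_python_sum}


def build_messages(question: str, effort: str = "medium") -> list[dict]:
    """Build a chat history whose system message sets the reasoning effort."""
    if effort not in ("low", "medium", "high"):
        raise ValueError(f"unknown reasoning effort: {effort!r}")
    return [
        {"role": "system", "content": f"Reasoning: {effort}"},
        {"role": "user", "content": question},
    ]


def dispatch_tool_call(call: dict):
    """Run one tool call of the assumed form {"name": ..., "arguments": "<json>"}."""
    func = REGISTRY[call["name"]]
    args = json.loads(call["arguments"])
    return func(**args)
```

In a real loop, the value returned by `dispatch_tool_call` would be appended to the conversation as a tool message so the model can continue from the result.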

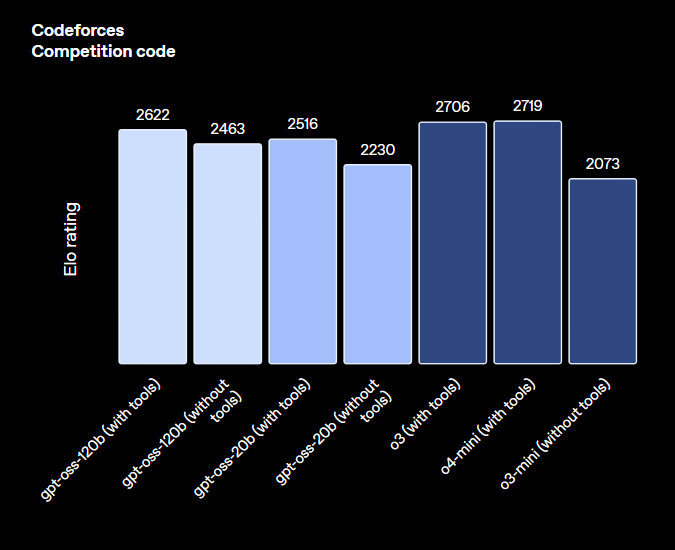

Impressive Performance

According to OpenAI’s reported evaluations:

Programming: gpt-oss-120b outperformed o3-mini (in the “without tools” scenario) and rivaled o4-mini.

Healthcare: Both models surpass closed models such as o3-mini in realistic scenarios.

Competitive math and science: Both models demonstrated state-of-the-art performance on these datasets.

Safety and Risk Mitigation

OpenAI applied its safety training practices and also tested adversarially fine-tuned versions of the models to simulate malicious use.

Even under extreme fine-tuning in sensitive areas (such as biology and cybersecurity), the models did not reach dangerous capability levels according to the company’s methodology.

Additionally, a global Red Teaming Challenge will be launched with up to $500,000 in prizes for identifying vulnerabilities, reinforcing the commitment to open model safety.

Availability and How to Get Started

The model weights are already available for download on Hugging Face, quantized in MXFP4 to reduce memory use and execution costs.
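
A back-of-the-envelope estimate shows why the quantization matters. The sketch below assumes every weight is stored in MXFP4 (4-bit values plus one shared 8-bit scale per block of 32, i.e. 4.25 bits per weight) and compares that with 16-bit storage. This is a simplification: in practice some layers may be kept at higher precision, so real checkpoint sizes will differ somewhat.

```python
# MXFP4 stores 4-bit values plus one shared 8-bit scale per block of 32 values.
BITS_PER_WEIGHT_MXFP4 = 4 + 8 / 32  # 4.25 bits
BITS_PER_WEIGHT_BF16 = 16


def weight_size_gb(params_billion: float, bits_per_weight: float) -> float:
    """Approximate weight storage in gigabytes (10^9 bytes)."""
    total_bits = params_billion * 1e9 * bits_per_weight
    return total_bits / 8 / 1e9


# Total parameter counts (in billions) as quoted earlier in the article.
for name, params_b in [("gpt-oss-120b", 117.0), ("gpt-oss-20b", 21.0)]:
    mxfp4 = weight_size_gb(params_b, BITS_PER_WEIGHT_MXFP4)
    bf16 = weight_size_gb(params_b, BITS_PER_WEIGHT_BF16)
    print(f"{name}: ~{mxfp4:.0f} GB in MXFP4 vs ~{bf16:.0f} GB in 16-bit")
```

Under this assumption the larger model needs about 62 GB and the smaller one about 11 GB, which is consistent with the single 80 GB GPU and 16 GB memory targets mentioned earlier.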

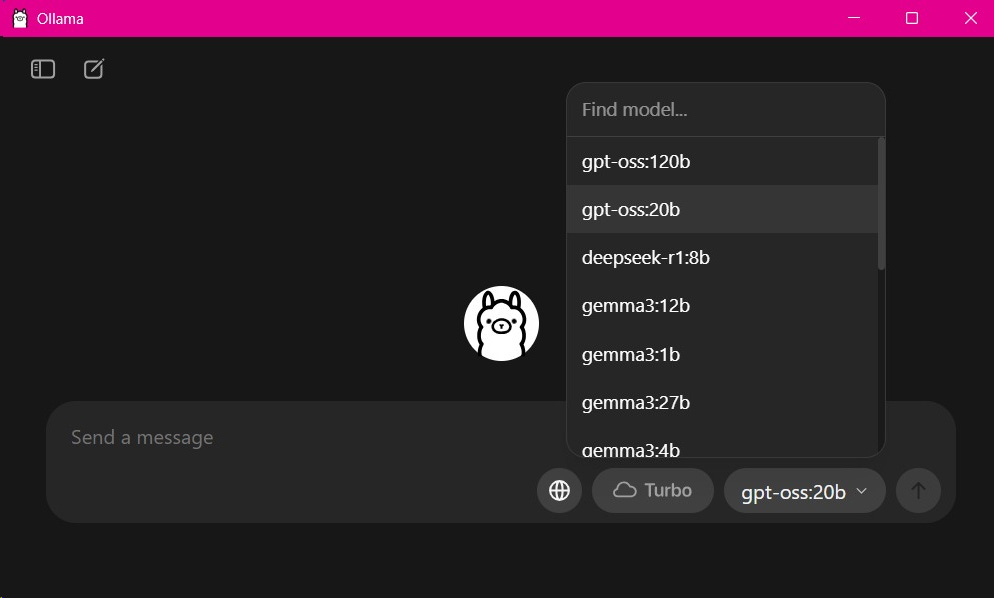

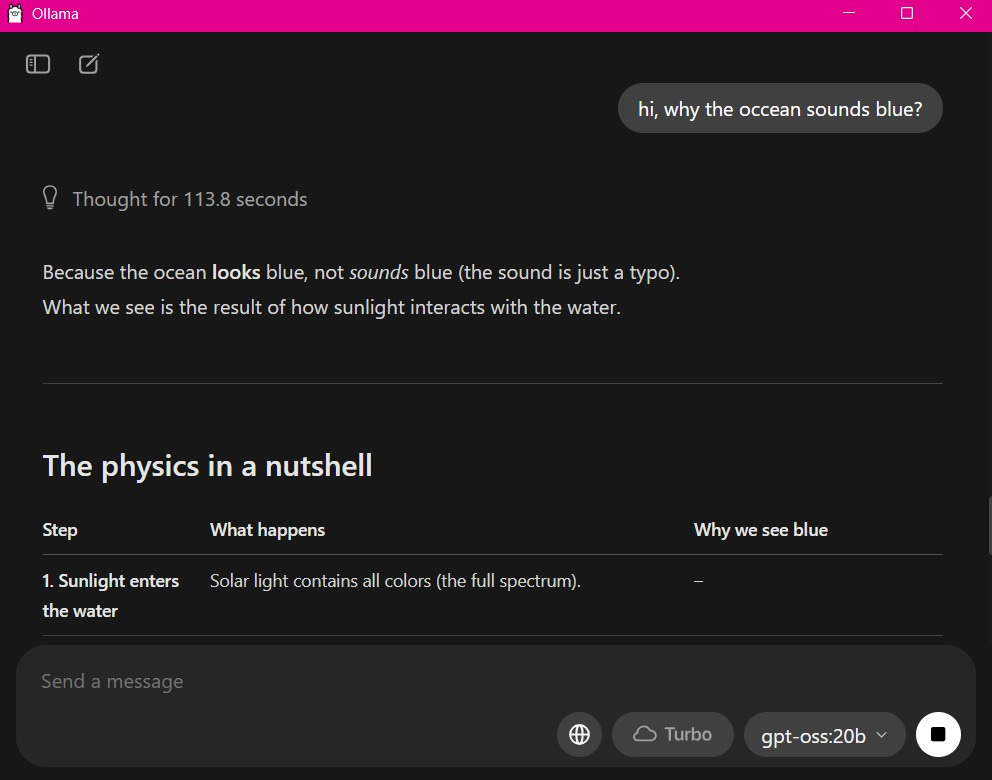

The easiest way to run the model locally is through Ollama. Download and install the latest version of Ollama, then open the program and select the gpt-oss:20b model.

When you send your first question, the program downloads the model to your machine (this may take a while, as it is about 12 GB). After that, it is ready to respond.
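
Once the model has been downloaded, it can also be queried from code. The following is a minimal sketch that assumes Ollama's local server is running on its default address (`http://localhost:11434`) and uses its `/api/chat` endpoint. The helper names `build_payload` and `ask` are illustrative, and the timeout is an arbitrary choice.

```python
import json
import urllib.request

# Assumption: Ollama is running locally on its default port.
OLLAMA_URL = "http://localhost:11434/api/chat"


def build_payload(question: str, model: str = "gpt-oss:20b") -> dict:
    """Build a non-streaming chat request for a single user question."""
    return {
        "model": model,
        "messages": [{"role": "user", "content": question}],
        "stream": False,  # ask for one complete JSON response
    }


def ask(question: str, model: str = "gpt-oss:20b",
        url: str = OLLAMA_URL, timeout: float = 600.0) -> str:
    """Send the question to the local server and return the reply text."""
    data = json.dumps(build_payload(question, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=timeout) as response:
        body = json.load(response)
    return body["message"]["content"]
```

With the server running, `print(ask("Explain Mixture-of-Experts in one sentence."))` should print the model's answer. The first call can be slow while the model loads into memory.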

🚀 You can also run it with llama_cpp! See our notebook for a working example (Inference with gpt-oss.ipynb).

Conclusion

The release of the gpt-oss models marks a historic moment in the evolution of AI. For the first time since 2019, we have access to OpenAI’s cutting-edge language models that can be freely run, modified, and deployed.

For developers, researchers, and companies, this represents an unprecedented opportunity to innovate with state-of-the-art technology without the limitations of proprietary APIs or prohibitive costs.

Gpt-oss is not just another open model, but a strategic step by OpenAI to balance innovation and safety, providing the market with a powerful resource to create truly advanced and accessible AI solutions.