Running Models Locally with Ollama - Part 1

Ollama puts artificial intelligence within your reach, with the power and the privacy in your own hands.

In recent years, the demand for artificial intelligence and language models has grown rapidly.

With it has come the need to run these models locally, which gives you greater control over your data and better privacy. One tool that has gained prominence in this scenario is Ollama.

In this post, we will explore how to run language models locally using Ollama and its key advantages.

What is Ollama?

Ollama is a tool that lets you run large language models on your own machine in a simple and efficient way.

Through a simple command-line interface, it gives you access to pre-trained models and lets developers build their own AI solutions without relying on cloud services.

Advantages of Running Models Locally

Privacy: By running models locally, you maintain full control over your data, which is crucial when dealing with sensitive information.

Low Latency: Locally run models skip the network round trip to a cloud service, so responses can be faster (in theory, haha; in practice it depends on your hardware).

Cost-Effectiveness: Depending on usage, running models locally can be more economical than paying for cloud API calls.

How to Get Started with Ollama

Running models with Ollama is really simple. To get started, follow the steps below:

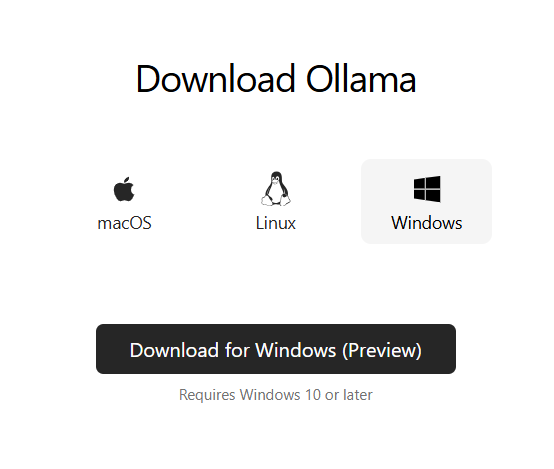

1. Installing Ollama

First, you need to install Ollama on your machine. On Linux, you can do this through the terminal with the following command:

curl -fsSL https://ollama.com/install.sh | sh

Or download the installer for your operating system directly from the website: https://ollama.com

Follow the installer instructions. On Windows, after installation, the Ollama icon will appear in the system tray at the bottom right of your screen. When you see this icon, you'll know that Ollama is running on your computer, ready to use.
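Before moving on, it can help to confirm that the install worked. The snippet below is a minimal sketch, not part of the official instructions: `check_ollama` is a hypothetical helper name, and it only assumes that the installer put an `ollama` binary on your PATH.

```shell
# check_ollama is a hypothetical helper (not part of Ollama itself).
# It reports whether the `ollama` command can be found on PATH.
check_ollama() {
    if command -v ollama >/dev/null 2>&1; then
        # `ollama --version` prints the installed version.
        echo "found: $(ollama --version 2>&1 | head -n 1)"
    else
        echo "not found: install Ollama or open a new terminal so PATH is refreshed"
    fi
}

check_ollama
```

If it reports "not found" right after installing, opening a new terminal window is usually the first thing to try.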

2. Downloading a Model

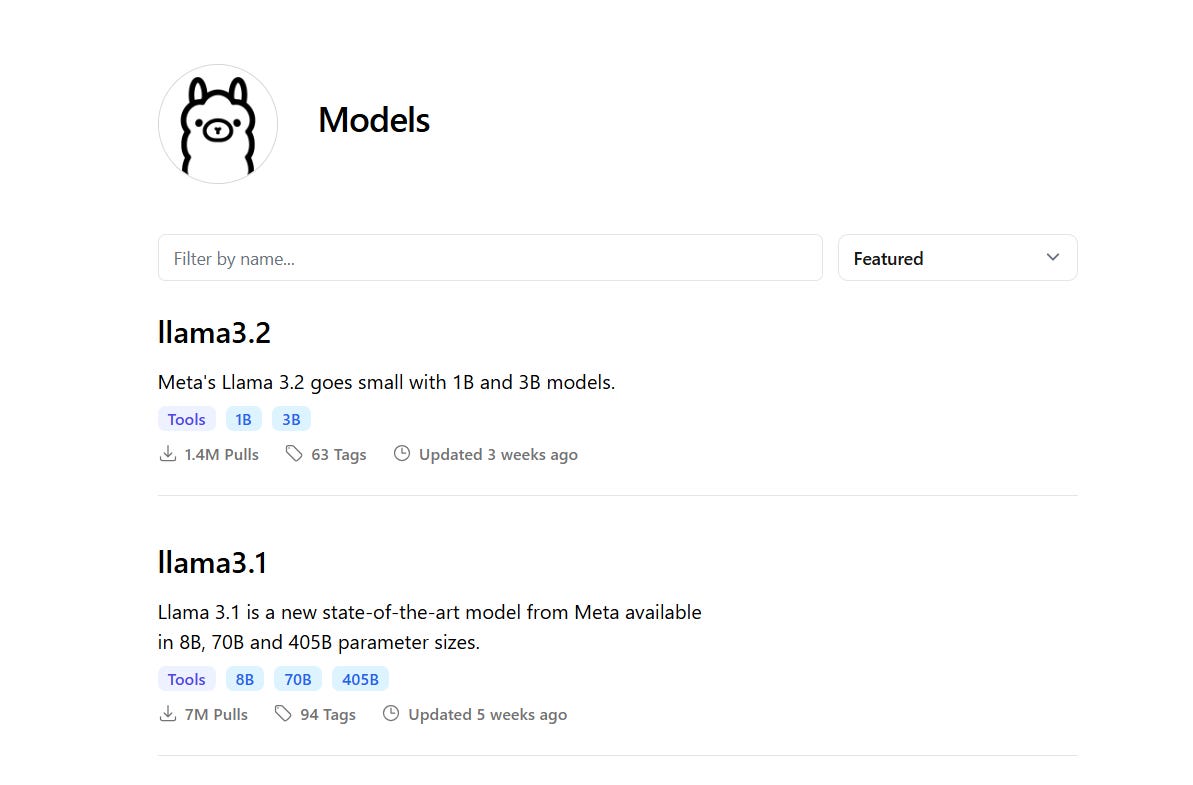

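After installation, you can download a pre-trained model. For example, to download the llama3.2 model, run the following command in the terminal or Windows command prompt:

ollama run llama3.2

The first time you run it, Ollama downloads the model and then opens a chat session (type /bye to exit). If you only want to download the model without starting a session, use ollama pull llama3.2 instead.

The Llama 3.2 models from Meta are multilingual language models available in 1B and 3B parameter sizes, super lightweight and easy to run even on your laptop.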
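Since Llama 3.2 comes in two sizes, you may want to pick one explicitly. Here is a minimal sketch, assuming the Ollama library tags `llama3.2:1b` and `llama3.2:3b` (check the Models page for the current tag names). `pull_llama32` and the `DRY_RUN` switch are made up for this example; with `DRY_RUN=1` the function prints the command instead of downloading anything.

```shell
# pull_llama32 is a hypothetical helper: it downloads one Llama 3.2 size.
# Usage: pull_llama32 [1b|3b]   (defaults to 3b)
# Set DRY_RUN=1 to print the command instead of running it.
pull_llama32() {
    size="${1:-3b}"
    case "$size" in
        1b|3b) ;;  # the two sizes mentioned in this post
        *) echo "unknown size: $size (expected 1b or 3b)" >&2; return 1 ;;
    esac
    if [ "${DRY_RUN:-0}" = "1" ]; then
        echo "ollama pull llama3.2:$size"
    else
        # `ollama pull` downloads the model without opening a chat session.
        ollama pull "llama3.2:$size"
    fi
}

DRY_RUN=1 pull_llama32 1b   # prints: ollama pull llama3.2:1b
```

Call it without `DRY_RUN` to actually download the model.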

On the website, you can see the available models by clicking on Models:

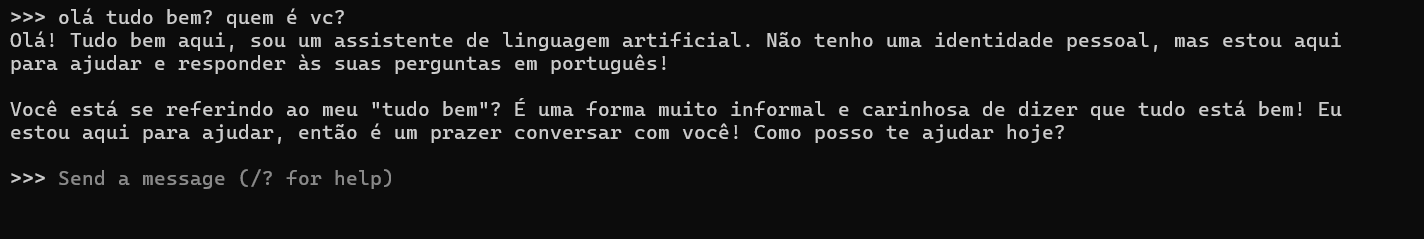

3. Running the Model

Once the model is downloaded, you can run it (inference) using the following command:

ollama run llama3.2

Ollama will process your input and provide a real-time response, then wait for the next interaction.

Done! You're now chatting with your LLM on your own computer! 👏

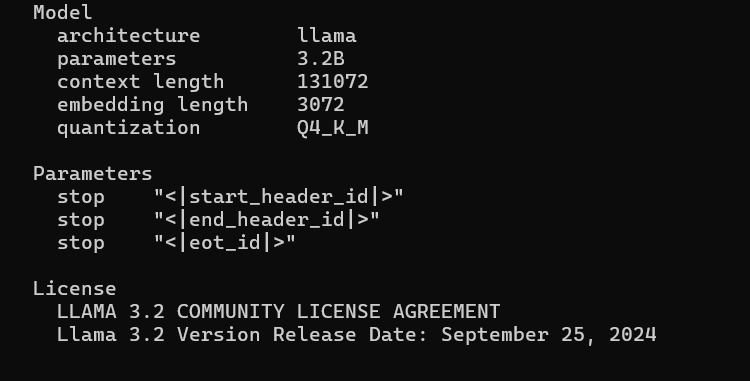
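Besides the interactive chat, `ollama run` also accepts the prompt as an argument, answers once, and exits, which is handy in scripts. A minimal sketch: `ask_once` is a hypothetical wrapper name, and the prompt is just an example.

```shell
# ask_once is a hypothetical wrapper: it sends a single prompt to a model
# and returns to the shell instead of opening the interactive chat.
# Usage: ask_once MODEL "PROMPT"
ask_once() {
    model="$1"
    prompt="$2"
    if ! command -v ollama >/dev/null 2>&1; then
        echo "ollama not found on PATH" >&2
        return 127
    fi
    # Passing the prompt as an argument makes `ollama run` answer once and exit.
    ollama run "$model" "$prompt"
}

# Example call (uncomment to try it; the model is downloaded first if missing):
# ask_once llama3.2 "Explain in one sentence what a language model is."
```

In the interactive session, typing /bye takes you back to the shell.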

4. Model Details

To see more details about the model, just run this command:

ollama show llama3.2

You'll see model details such as the number of parameters, quantization, license, etc.

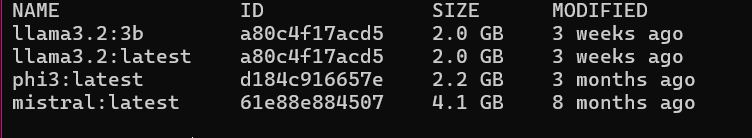

5. Listing Your Models

To view all the models you’ve downloaded, run this command:

ollama list

This command will list all the models available on your machine. This is important because models can take up a lot of disk space!
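To work with that list in a script, for example to see what you could delete to reclaim disk space, you can pull out just the model names. A minimal sketch, assuming the four-column layout (NAME, ID, SIZE, MODIFIED) that `ollama list` prints; `model_names` is a hypothetical helper, and the sample rows are made up for illustration.

```shell
# model_names is a hypothetical helper: it reads `ollama list`-style output
# from stdin and prints only the first column, skipping the header row.
model_names() {
    awk 'NR > 1 && NF > 0 { print $1 }'
}

# Sample text in the same column layout; IDs, sizes and dates are invented.
sample='NAME               ID              SIZE      MODIFIED
llama3.2:latest    0123456789ab    2.0 GB    3 days ago
llama3.2:1b        ba9876543210    1.3 GB    5 minutes ago'

printf '%s\n' "$sample" | model_names
# prints:
#   llama3.2:latest
#   llama3.2:1b
```

With Ollama installed, `ollama list | model_names` gives the same result for your real models, and `ollama rm <name>` deletes one you no longer need.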

End of Part One

Running models locally with Ollama is an excellent way to leverage the power of artificial intelligence while maintaining privacy and efficiency.

With its simple installation and variety of available models, it’s a valuable tool for developers and businesses seeking innovation in their solutions.

In the next post, we’ll create a chatbot application using Ollama with Docker.

Stay tuned!! 🩷