Gemma 4: Google’s Open-Weight Model Changes the Game

Google has released Gemma 4, and the message is clear: open source doesn’t have to mean second-tier.

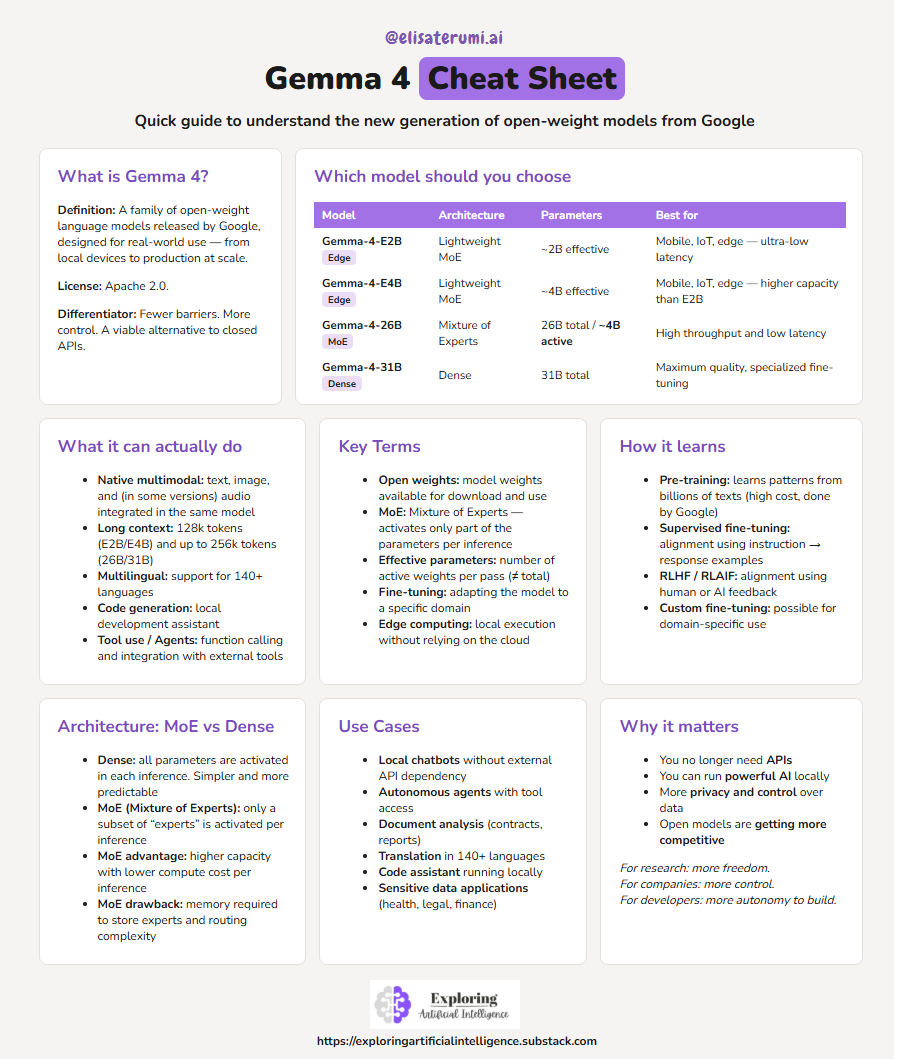

Gemma 4 is Google’s new generation of open-weight language models.

More than just an update, it signals an important shift in how advanced models are being made available.

It’s not just about performance. It’s about access.

Architecture and variants

Gemma 4 arrives with a clear goal: to deliver AI models that are efficient, scalable, and truly usable in real-world scenarios.

The lineup ranges from lightweight versions that can run locally to larger models with tens of billions of parameters.

Main variants:

Gemma-4-E2B: an “effective 2B” model, designed for edge computing and devices with limited memory. The “effective” in the name indicates that roughly 2B parameters are active during inference, regardless of the model’s total size (a naming convention Google uses for its smaller models).

Gemma-4-31B: a dense model with 31 billion parameters, targeted at orchestration and complex reasoning tasks. It is a natural candidate for fine-tuning in specialized domains.

Gemma-4-26B-A4B: a Mixture of Experts architecture with 26B total parameters, of which only about 4B are activated per token. This reflects the classic MoE trade-off: less compute per token, and therefore higher throughput, at the expense of the memory needed to keep all the experts loaded.
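To make the MoE trade-off concrete, here is a rough back-of-the-envelope sketch. It uses only the nominal parameter counts from the variant names; the bytes-per-parameter figures are generic assumptions about common precisions, and the helper names are made up for illustration. It ignores activations, KV cache, and runtime overhead, so treat the numbers as lower bounds rather than measurements.

```python
# Back-of-the-envelope arithmetic for the MoE trade-off described above.
# Parameter counts are the nominal figures from the variant names; the
# bytes-per-parameter values are generic assumptions, not measured numbers.

BYTES_PER_PARAM = {"bf16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, dtype: str) -> float:
    """Approximate memory needed to hold the weights, in decimal gigabytes."""
    return num_params * BYTES_PER_PARAM[dtype] / 1e9


def active_fraction(active_params: float, total_params: float) -> float:
    """Share of the weights that take part in computing each token."""
    return active_params / total_params


moe_total, moe_active = 26e9, 4e9  # Gemma-4-26B-A4B (nominal)
dense_total = 31e9                 # Gemma-4-31B (nominal)

for dtype in BYTES_PER_PARAM:
    print(
        f"{dtype}: MoE weights ~{weight_memory_gb(moe_total, dtype):.1f} GB, "
        f"dense weights ~{weight_memory_gb(dense_total, dtype):.1f} GB"
    )

print(f"MoE active share per token: {active_fraction(moe_active, moe_total):.0%}")
```

The point of the sketch: the MoE variant still has to keep all 26B parameters in memory, but each token only touches a small share of them, which is where the throughput advantage comes from.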

All of this comes with support for multimodality, long context windows, 140+ languages, and fine-tuning capabilities.

Key highlights

The main advancements include:

High reasoning capability

Designed for more complex tasks, including structured reasoning and agent-based workflows.

Efficiency for local execution

A lightweight version (~2B effective), optimized to run on resource-constrained devices such as laptops and edge environments.

Native multimodality

Support for text, images, and, in smaller versions, even audio, all within a single model.

Long context

Capable of handling large volumes of information (up to ~128k tokens in smaller versions), enabling work with long documents.

Multilingual

Trained on over 140 languages, enabling global applications.

Code generation

Supports both code generation and understanding, making it suitable as a local development assistant.

Agent support (tool use)

Includes function calling capabilities and integration with external tools, enabling more autonomous applications.

Real-world focus (edge and production)

Designed to run from local devices to production environments, with low latency and reduced cost.
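To give a feel for what tool use means on the application side, here is a minimal, generic sketch of the dispatch step. It assumes the model emits a tool call as a JSON object with `name` and `arguments` fields; that shape, the `get_weather` tool, and the `dispatch_tool_call` helper are all hypothetical and are not taken from Gemma 4's documentation. The model output is simulated so the example runs offline.

```python
import json
from typing import Any, Callable, Dict


# Hypothetical tool: a stand-in for any function the application exposes.
def get_weather(city: str) -> str:
    return f"Sunny in {city}"  # canned answer so the sketch runs offline


# Registry mapping tool names to the Python callables that implement them.
TOOLS: Dict[str, Callable[..., Any]] = {"get_weather": get_weather}


def dispatch_tool_call(model_output: str) -> Any:
    """Parse a JSON tool call and run the matching registered function.

    Assumed shape (hypothetical): {"name": "<tool>", "arguments": {...}}.
    """
    call = json.loads(model_output)
    name = call["name"]
    if name not in TOOLS:
        raise ValueError(f"Unknown tool: {name}")
    return TOOLS[name](**call.get("arguments", {}))


# Simulated model output; a real run would take this string from the model.
simulated_output = '{"name": "get_weather", "arguments": {"city": "Lisbon"}}'
print(dispatch_tool_call(simulated_output))
```

In a real agent loop, the tool result would be sent back to the model as a new message so it can produce the final answer. Rejecting unknown tool names, as above, keeps the model from triggering arbitrary code.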

Why this matters

In practice, the release of Gemma 4 means fewer barriers.

Less reliance on closed APIs. More control. More experimentation.

By adopting a permissive license (Apache 2.0), Google positions Gemma 4 as a viable alternative for real-world applications, both in research and production. It’s not just a model to experiment with — it’s a model to use.

And this puts pressure on the market.

Because, gradually, the standard is changing: powerful models no longer need to be exclusively closed.

They can be accessible. Adaptable. Runnable locally.

And this opens the door to a new generation of applications — more customized, more private, and, above all, more independent.

Practical example

🚀 Visit our Notebooks section to see a complete hands-on example of how to run Gemma 4 on Colab using Ollama.

(search for Gemma4_ollama.ipynb)
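For readers who want a taste before opening the notebook, here is a minimal sketch of calling a locally running Ollama server from Python. This is not the notebook's code. It assumes Ollama is running on its default port and that a Gemma 4 model has already been pulled; the model tag `gemma4` is a placeholder assumption, so check `ollama list` for the tag actually installed on your machine.

```python
import json
import urllib.request

OLLAMA_URL = "http://localhost:11434/api/generate"  # Ollama's default local endpoint
MODEL_TAG = "gemma4"  # ASSUMPTION: placeholder tag, replace with the one you pulled


def build_payload(prompt: str, model: str = MODEL_TAG) -> dict:
    """Build the JSON body for a single, non-streaming generation request."""
    return {"model": model, "prompt": prompt, "stream": False}


def generate(prompt: str, model: str = MODEL_TAG, url: str = OLLAMA_URL) -> str:
    """Send the prompt to the local Ollama server and return the generated text."""
    data = json.dumps(build_payload(prompt, model)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request, timeout=120) as response:
        return json.loads(response.read().decode("utf-8"))["response"]


# Example call (requires a running Ollama server with the model available):
# print(generate("Explain Mixture of Experts in two sentences."))
```

Only the standard library is used here to keep the sketch dependency-free; the official `ollama` Python package is another option if you prefer a higher-level client.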

Conclusion

Gemma 4 is not just another release.

It is a clear signal of where AI is heading.

More efficient models. More accessible. And, most importantly, more under the control of those who build.

This changes the dynamics of the game.

Because we are no longer just consuming AI: we are operating, adapting, and integrating models into our own environments, without relying exclusively on external APIs.

And that has deep implications.

For research, it means more freedom.

For companies, more control and lower costs.

For developers, more autonomy to create.

The future of AI will not just be more powerful.

It will be more open.

And those who start exploring this now will be ahead.