What CES 2026 Reveals About the Future of AI

Every year at the beginning of January, Las Vegas turns into a large laboratory of possible futures.

The city hosts CES (the Consumer Electronics Show), one of the world’s largest technology events, where companies present not only products but also strategic directions for the years ahead.

CES 2026 delivered a clear message: the era of “generic AI hype” is coming to an end.

The focus now is applied, integrated, and invisible AI, running close to the user and solving concrete problems.

For those working in engineering, product, data, or research, some signals deserve attention.

What is CES (and why it matters)

CES is not a trade show for commercial launches in the traditional sense. It works as a barometer of technological maturity.

Many products are still prototypes. Others reach the market months later. But almost all of them reveal where the industry is investing.

Below are some highlights from the 2026 edition.

1. The shift toward on-device AI

CES 2026 showed a clear trend in how artificial intelligence runs on our devices: more local processing, less dependence on the cloud.

Voice assistants, home devices, wearables, and sensors are running models locally or in hybrid architectures.

For example, IAI Smart demonstrated its SmartVoice technology on Emerson devices (some already available), processing voice commands locally. The pitch is clear: lower latency, greater privacy, and less load on data centers.

This movement points to concrete benefits:

Faster processing

Less dependence on connectivity

Greater data privacy

Lower energy cost at scale

For those working with models, efficiency, quantization, and compression matter more than ever.
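To make the quantization point concrete, here is a minimal sketch of symmetric int8 quantization in plain Python. It is an illustration under simplifying assumptions (one scale for the whole tensor, made-up weight values, and hypothetical function names `quantize_int8` and `dequantize`), not a description of how any product shown at CES works.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale (symmetric, per-tensor)."""
    max_abs = max(abs(w) for w in weights)
    # Guard against an all-zero tensor, where any scale would do.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [v * scale for v in quantized]


# Illustrative weights only; real models hold millions of values per tensor.
weights = [0.82, -1.27, 0.05, 0.0, 0.64]
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

print("int8 values:", q)
print("scale:", scale)
print("max round-trip error:", max_error)
```

Each weight now fits in one byte instead of the four used by a 32-bit float, and the round-trip error stays within half the scale. That trade of a little precision for a much smaller memory footprint is what makes local inference practical on constrained devices.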

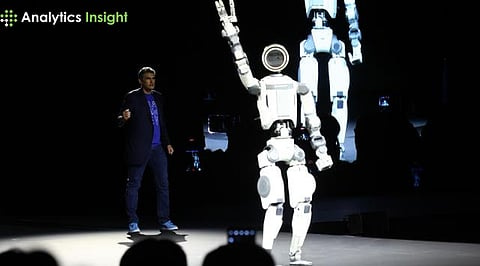

2. Robots solving specific problems

Robots have always drawn attention at CES, and 2026 brought concrete examples of real-world applications.

Highlights include:

The Dreame X50 Ultra, a robotic vacuum cleaner that navigates and cleans stairs, rotating 90 degrees to maintain stability while vacuuming each step

The Boston Dynamics Atlas, winner in the Best Robot category, a humanoid with exceptional range of motion and durability, being prepared to work in Hyundai factories

The Beatbot RoboTurtle, a robotic turtle for monitoring coral reefs and fish populations, recharged via solar panels

The pattern: robotics focused on well-defined tasks and real applications.

3. Hardware being redesigned

CES 2026 showed that AI is not only “inside” devices. It is changing their form factor.

Concrete examples:

Samsung Galaxy Z TriFold (overall winner of Best of CES 2026): a smartphone with three foldable panels that transforms into a tablet, designed for real mobile productivity

Honor Robot: a smartphone prototype with a camera mounted on an external robotic arm, overcoming internal space limitations

Dell UltraSharp 52 Thunderbolt Monitor: a single 52-inch display with 6K resolution and a 120 Hz refresh rate, also functioning as a Thunderbolt 5 hub

Samsung R95H TV: 130 inches with Micro RGB LED technology for a wider color gamut (100% of the BT.2020 color space)

Here, AI appears less as an isolated feature and more as an enabler of new form factors.

4. Coordination between personal devices

CES 2026 showed a growing variety of devices “around” the user:

Smart rings (such as Vocci AI, which records audio on demand and generates transcripts)

Earbuds with cameras (Razer Project Motoko, with dual 12MP cameras connected to AI services)

Smart collars (Satellai Collar Go, with AI monitoring animal health)

Presence sensors (Aqara FP400, detecting presence in a less invasive way than cameras)

Lenovo and Motorola introduced Qira, an operating-system-level AI platform that understands context and suggests actions across different devices. It will arrive on Lenovo devices in early 2026 and on Motorola smartphones later.

This points to a future where coordination between devices becomes just as important as the individual device.

5. Sustainability with practical application

At CES 2026, sustainability appeared in a concrete way:

Clear Drop Soft Plastic Compressor (winner in Sustainability): compacts plastic bags into bricks that can be sent for recycling, solving the problem of plastics not accepted by conventional recycling centers

Willo (winner in Energy): wireless charging technology that powers devices placed within an energy field, without pads or cables

Jackery Solar Mars Bot: a power station with retractable solar panels that autonomously moves to track the sun

Lockin V7 Max: a smart lock powered by wireless optical charging from a base station up to 4 meters away

Less green marketing. More applied engineering.

What CES 2026 tells us, in the end

The message is straightforward.

We are seeing a phase where:

AI begins to be implemented more efficiently and locally in specific use cases

Hardware and software are designed together to solve concrete problems

Robots focus on real utility rather than just impressing

Privacy, cost, and energy return to the center of design

For those building technology, CES 2026 was not about curious gadgets. It was about application, integration, and maturity.

And that, perhaps, is the strongest signal of all.

This is a sharp CES read because it highlights a shift: AI is moving from “wow demos” to integrated infrastructure that is on-device, bounded in task, coordinated across ecosystems, and efficiency-first. That’s the product narrative.

The governance reality is that these same shifts are also liability-shaping moves.

When AI is:

on-device/hybrid,

task-constrained, and

embedded in known workflows,

it becomes more enforceable: you can define duty of care, trace decision pathways, document intended use, and assign responsibility.

In contrast, “general” and open-ended systems are harder to audit and easier to litigate because accountability collapses into ambiguity (“the model did it / the user did it / the vendor did it”).

So CES 2026 reads like a quiet convergence between engineering and enforcement: the architectures that survive in the market will increasingly be those that survive in discovery (documentation, logs, risk controls, post-deployment monitoring).

For emerging markets, especially across Africa and in Kenya, this matters even more, because the constraints are real:

connectivity is uneven,

budgets are tighter,

device lifecycles are longer,

and regulatory capacity is still consolidating.

On-device/hybrid AI is not just a privacy story here; it’s a sovereignty and resilience story.

Local inference reduces dependency on continuous cloud access and cross-border data transfer, but it raises new cybersecurity questions: edge devices become attack surfaces, model updates become a supply-chain risk, and ecosystem coordination can amplify systemic failure if it is not governed well.

For Kenya specifically, readiness will be less about copying “AI principles” and more about practical rails:

baseline cyber hygiene for edge deployments (device security, patching, identity, logging),

procurement rules that require auditability and incident reporting,

clear accountability lines between vendors, integrators, and institutions,

and a regulator posture that can enforce process even before it can enforce deep model specifics.

Net: CES 2026 suggests the future of AI is “closer to the user.” Governance needs to follow it there with enforceable accountability, security-by-design, and agency preserved at the point of use.

Curious how you see the enforcement side evolving: do you expect regulators to focus first on model capability, or on operational controls like auditability, incident response, and duty-of-care documentation?